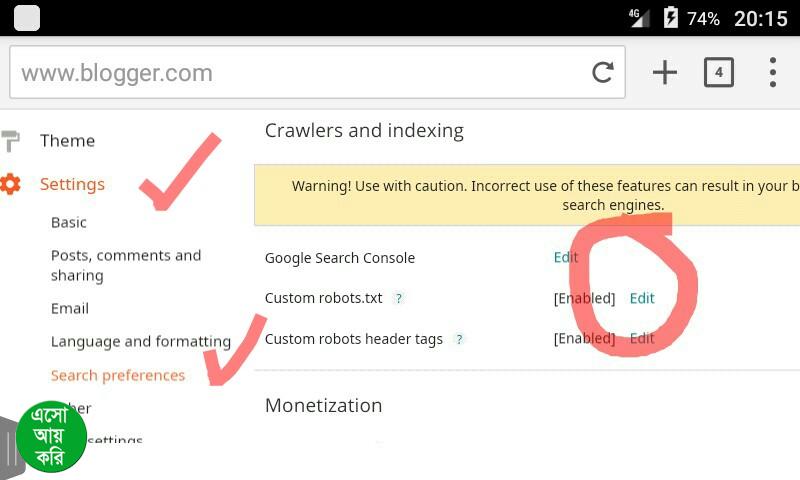

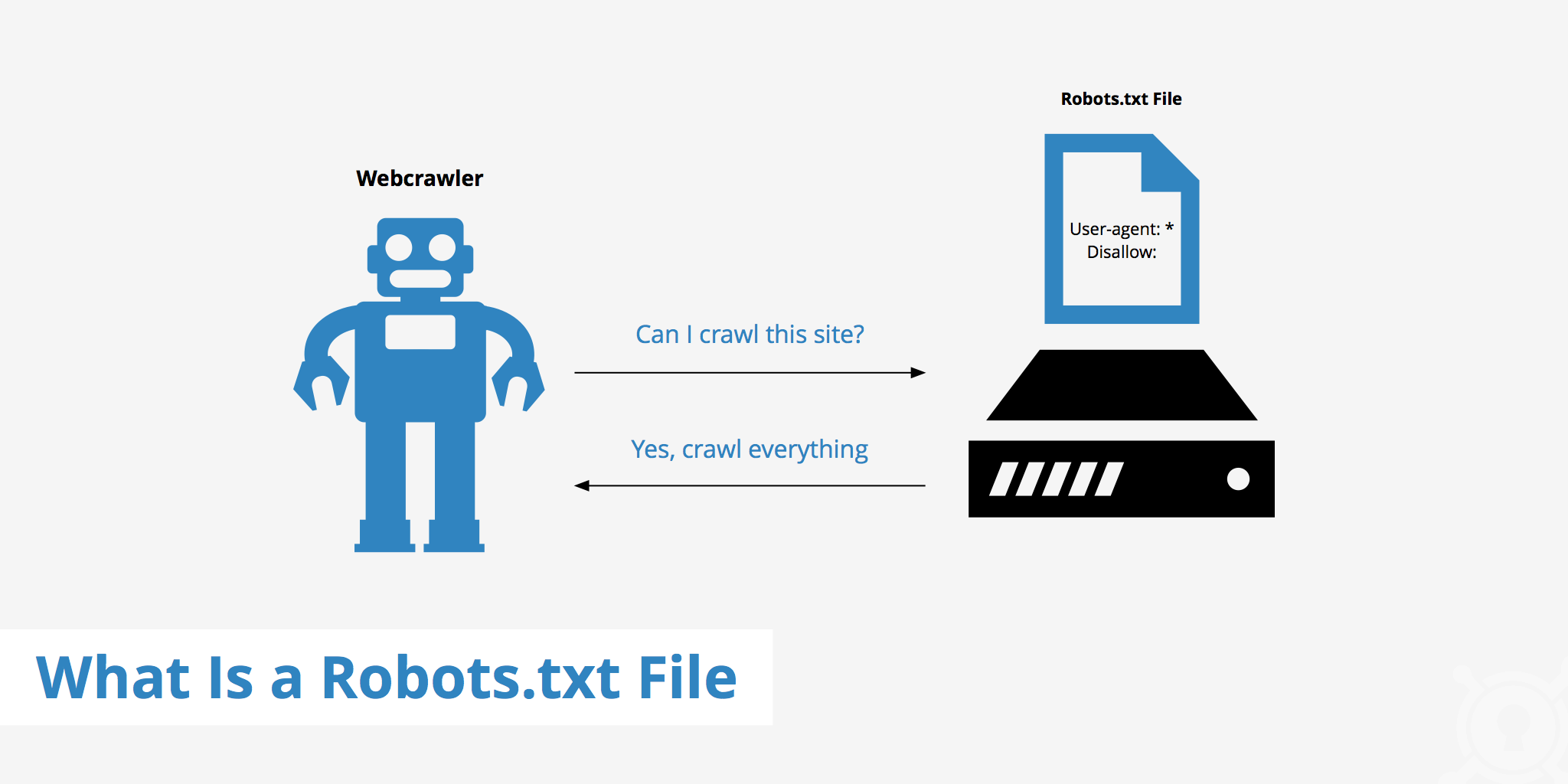

The robots exclusion standard was developed in the 1990s in an effort to control the ways that web bots could interact with websites. Robots.txt is the part of this standard that allows you to dictate which bots can access specific pages on a website. Akin to a bouncer at a nightclub, robots.txt enables you to restrict access to certain areas of the site or block certain bots entirely. It does this by specifying which user agents (web bots) can crawl the pages on your site, with instructions that “allow” or “disallow” their behavior. Simply put, robots.txt tells web bots which pages to crawl and which pages not to crawl.

Search engines exist to crawl and index web content in order to offer it up to searchers based on implied intent. They accomplish this by following links that spider from site to site, spanning the breadth and relevance of a particular subject. This has proven to be a remarkably effective approach. When a web bot is about to crawl a site, it first checks for a robots.txt file to see if there are any specific crawling instructions. From there, the search engine bot will proceed accordingly.

To illustrate how a robots.txt file works in a real-world application, we’ll show rather than tell. Here’s an example of a common WordPress robots.txt file, line by line:

- User-agent: * (applies the rules that follow to all web bots).
- Disallow: /wp-admin/ (prevents the admin area from being crawled).
- Allow: /wp-admin/admin-ajax.php (keeps that one file crawlable so bots can fetch and render JavaScript, CSS, etc.).

This is a clean robots.txt file that blocks very little. Word to the wise: don’t ever block your CSS or JavaScript files. This simple robots.txt file gets the job done, and is fine in most cases. However, robots.txt files can get far more specific depending on the demands of various websites.

Instructions can be included for multiple user-agents, allows, disallows, crawl-delays, sitemap location, etc. When multiple user-agents exist, each user-agent will only follow the allows and disallows that are specific to them. The decision to disallow certain pages can be advantageous when it comes to SEO. Generally speaking, though, you absolutely want web bots to crawl (and index) your site. Botching a robots.txt file can result in your site being deindexed, which would be a disaster.

It is worth noting that not all web robots adhere to robots.txt crawl instructions, not unlike the National Do Not Call Registry. The bots that ignore them tend to fall under the sinister category that includes malware, email harvesting, and spambots. Robots.txt files act more as suggestions than laws. If your site is having security issues involving bots, there are companies that offer security features in this realm. For a masterclass in how specific and comprehensive a robots.txt file can be, look at the robots.txt file of a large, heavily crawled site. As with all things SEO, it’s best to take a best practices approach while the search engine overlords do their bidding.

The robots.txt file must be placed in a website’s top-level directory. This is where web bots begin their crawling. It’s case sensitive: when creating a robots.txt file, be sure to name it “robots.txt”, all lowercase. Do not use robots.txt files in an attempt to hide private user information. Anyone can see what pages you want to allow or disallow. When creating a robots.txt file, go to the source. While Google isn’t the only player in the search engine game, they dominate it. Each subdomain on a root domain uses separate robots.txt files. This means that a subdomain and its root domain should each have their own robots.txt file, served from /robots.txt on the respective host. Include the location of your domain’s sitemap. This is conventionally done at the bottom of the robots.txt file. Don’t just rely on this; submit the sitemap directly to Google Search Console as well.
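To make the allow and disallow behavior concrete, here is a minimal Python sketch of how a well-behaved bot might read the WordPress rules from this article. It is an illustration, not any search engine's actual code. The function names (`parse_groups`, `can_crawl`), the second sample file, and its `/drafts/` and `/private/` paths are all assumptions made up for the example. It assumes the "longest matching path wins, Allow wins ties" precedence that major crawlers document, matches user-agent names exactly, and ignores wildcards (`*`, `$`), Crawl-delay, and Sitemap lines.

```python
# The WordPress example from the article.
WORDPRESS_ROBOTS = """\
User-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php
"""

# Hypothetical file with two groups, to show per-agent rules.
TWO_GROUPS = """\
User-agent: Googlebot
Disallow: /drafts/

User-agent: *
Disallow: /private/
"""


def parse_groups(text):
    """Map each lower-cased user-agent name to its (is_allow, path) rules."""
    groups = {}
    current = []            # agents named by the group being read
    reading_agents = False  # True while consecutive User-agent lines continue
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if not reading_agents:
                current = []  # a new group starts here
            current.append(value.lower())
            groups.setdefault(value.lower(), [])
            reading_agents = True
        elif field in ("allow", "disallow"):
            reading_agents = False
            if value:  # an empty Disallow blocks nothing
                for agent in current:
                    groups[agent].append((field == "allow", value))
    return groups


def can_crawl(text, agent, path):
    """Return True if `agent` may crawl `path` under the rules in `text`."""
    groups = parse_groups(text)
    # A bot follows only its own group, falling back to "*" if it has none.
    rules = groups.get(agent.lower(), groups.get("*", []))
    best = (0, True)  # (matched prefix length, allowed); no match means allowed
    for is_allow, prefix in rules:
        if path.startswith(prefix):
            # Longer prefix wins; on a tie, True (Allow) sorts above False.
            best = max(best, (len(prefix), is_allow))
    return best[1]


print(can_crawl(WORDPRESS_ROBOTS, "Googlebot", "/wp-admin/options.php"))
print(can_crawl(WORDPRESS_ROBOTS, "Googlebot", "/wp-admin/admin-ajax.php"))
print(can_crawl(TWO_GROUPS, "Googlebot", "/private/report.html"))
```

In the second sample file, Googlebot has its own group, so it is bound only by the `/drafts/` rule and may still crawl `/private/`, while every other bot falls back to the `*` group. That is the "each user-agent will only follow the allows and disallows that are specific to them" behavior in practice.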
